Dirty Pagetable

Exploiting a file UAF in the Linux kernel with the Dirty Pagetable technique to gain an arbitrary write primitive on physical memory.

Overview

In December of last year, my team thehackerscrew and I participated in the m0lecon2023 CTF finals, which were held in Turin, Italy.

There was a really cool kernel challenge, quite similar to CVE-2022-28350, which was all about exploiting a UAF on a file structure. In this post, I will explain the Dirty Pagetable technique and how it can be used to exploit many UAF vulnerabilities in the kernel.

Vulnerability Analysis

The vulnerability in the CVE was found in the kbase_kcpu_fence_signal_prepare function, in the /kernel/drivers/gpu/arm/midgard/csf/mali_kbase_csf_kcpu.c file:

| |

The function allocates a file structure on the Linux kernel heap and calls fd_install to associate this file structure with a new fd.

Then copy_to_user sends the allocated fence object to userspace; if it fails, the function calls fput(sync_file->file), which decrements the reference count (f_count) of our file structure. Since the file has just been created and is not referenced anywhere else, the count drops to 0 and the file structure is freed from the heap.

This flow results in a UAF, because the new fd is still associated with this file structure, even though it is freed.
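
To make this flow concrete, here is a small userspace model of it. This is only a sketch: every name in it (toy_file, toy_fd_table, toy_fence_ioctl and so on) is hypothetical, and the model collapses fd reservation and fd_install into one step. It only shows why the fd table entry outlives the object.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Toy model only: none of these names exist in the kernel. */
struct toy_file {
    long refcount;   /* stands in for f_count */
    bool freed;      /* set when the object would go back to the slab */
};

#define TOY_MAX_FDS 8
static struct toy_file *toy_fd_table[TOY_MAX_FDS];

/* Roughly fd reservation plus fd_install(): publish the file in the
 * lowest free slot of the fd table. */
static int toy_fd_install(struct toy_file *f)
{
    for (int fd = 0; fd < TOY_MAX_FDS; fd++) {
        if (toy_fd_table[fd] == NULL) {
            toy_fd_table[fd] = f;
            return fd;
        }
    }
    return -1;
}

/* Like fput(): drop one reference and "free" the object at zero. */
static void toy_fput(struct toy_file *f)
{
    assert(f->refcount > 0);
    if (--f->refcount == 0)
        f->freed = true;
}

/* Buggy flow: the fd is installed before the copy to userspace is known
 * to have succeeded, and the error path drops the only reference. */
static int toy_fence_ioctl(struct toy_file *f, bool copy_to_user_fails)
{
    f->refcount = 1;
    f->freed = false;
    int fd = toy_fd_install(f);
    if (fd >= 0 && copy_to_user_fails)
        toy_fput(f);  /* object freed, fd table entry left behind */
    return fd;
}
```

After toy_fence_ioctl(&f, true), the object is marked freed while toy_fd_table still points at it, which is exactly the dangling reference the exploit relies on.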

Exploitation

To exploit this bug, I will use the kernel module from the m0lecon challenge, because it is more straightforward to interact with and has the exact same bug.

Here is the vulnerable code, which is pretty much the same:

| |

It creates an anonymous file named [easy] and assigns an fd to it.

If the copy_to_user call fails, the file structure is freed, but we can still use the assigned fd in userspace, so we have a UAF.

Trigger the bug

To trigger the bug, we just need to open the vulnerable device and make the copy_to_user call fail.

We can do that by passing an invalid arg pointer, one that is not mapped in the process's virtual address space:

| |
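
The device path and ioctl number of the challenge module are not reproduced here, so the following sketch demonstrates the same kernel behaviour with a pipe instead: when the kernel has to copy data to a user pointer it cannot write to, the copy fails and the syscall reports EFAULT. The helper name errno_for_bad_user_pointer is made up for this illustration.

```c
#ifndef _GNU_SOURCE
#define _GNU_SOURCE
#endif
#include <assert.h>
#include <errno.h>
#include <stddef.h>
#include <sys/mman.h>
#include <unistd.h>

/* Returns the errno produced when the kernel tries to copy data to an
 * address the process cannot write to, 0 if the read unexpectedly
 * succeeded, or -1 if the setup itself failed. */
static int errno_for_bad_user_pointer(void)
{
    int pipefd[2];
    if (pipe(pipefd) != 0)
        return -1;

    /* A PROT_NONE page is mapped, but every access to it faults. */
    void *bad = mmap(NULL, 4096, PROT_NONE,
                     MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
    if (bad == MAP_FAILED) {
        close(pipefd[0]);
        close(pipefd[1]);
        return -1;
    }

    const char msg[8] = "AAAAAAA";
    ssize_t written = write(pipefd[1], msg, sizeof(msg));
    assert(written == (ssize_t)sizeof(msg));

    /* The kernel now has to copy the pipe data into `bad` and cannot. */
    errno = 0;
    ssize_t n = read(pipefd[0], bad, sizeof(msg));
    int err = (n < 0) ? errno : 0;

    munmap(bad, 4096);
    close(pipefd[0]);
    close(pipefd[1]);
    return err;
}
```

In the exploit, the same kind of pointer would be passed as the ioctl argument of the vulnerable device, so that the module's copy_to_user takes its error path.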

Since the kernel hands out the lowest unused fd number, we can guess that the fd associated with the freed file structure is dev_fd+1: it is the only file allocated in the kernel after the open call.

Then, to compile the exploit and run it in the vulnerable QEMU machine, I created this bash script, which builds the exploit and places it in the QEMU filesystem:

| |

Then when we run it, we get a kernel panic:

| |

OK, so we have a UAF in the Linux kernel heap. How do you exploit such a bug?

Understanding the Linux Kernel Memory Allocator

The Linux kernel needs a fast and efficient memory allocator of its own.

Unlike userspace programs that rely on glibc's malloc, the kernel needs a different approach, one that is more integrated with the system itself and conforms to DMA restrictions.

In the Linux kernel, the allocators are built upon slabs.

A slab is a set of one or more contiguous pages of memory that contain kernel objects of a specific size.

Slabs are grouped into slab caches, which are containers of multiple slabs of the same type.

There are two types of slab caches:

- Generic Slab Cache - General-purpose caches that can hold any object of a specific size. These are the slab caches that back memory allocated by kmalloc in the kernel. For example, if kmalloc(32) is called, the returned object comes from a generic slab cache called kmalloc-32.
- Dedicated Slab Cache - Slab caches dedicated to one specific type of structure. These are usually structures that are allocated frequently, so the kernel keeps them all in one slab cache. For example, file structures have their own dedicated slab cache, called filp.
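
As a rough illustration of how a request size maps to a generic cache, here is a minimal sketch. The list of sizes is an assumption: it reflects what is commonly seen on x86-64 with SLUB, and the exact set depends on the kernel version and configuration. The function name kmalloc_cache_for is hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Commonly seen general-purpose cache sizes (assumption, see above). */
static const size_t kmalloc_sizes[] = {
    8, 16, 32, 64, 96, 128, 192, 256, 512, 1024, 2048, 4096, 8192
};

/* Returns the object size of the smallest cache that fits `size`,
 * or 0 if the request is larger than every cache listed above. */
static size_t kmalloc_cache_for(size_t size)
{
    size_t count = sizeof(kmalloc_sizes) / sizeof(kmalloc_sizes[0]);
    assert(size > 0);
    for (size_t i = 0; i < count; i++) {
        if (size <= kmalloc_sizes[i])
            return kmalloc_sizes[i];
    }
    return 0;
}
```

Under these assumptions, a 32-byte request is served from kmalloc-32, while a 33-byte request already falls into kmalloc-64.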

There are multiple slab allocators available in the Linux kernel (SLUB, SLOB and SLAB), but SLUB is usually the default.

The SLUB allocator is much like SLAB, but it executes faster because it reduces the number of queues and chains used.

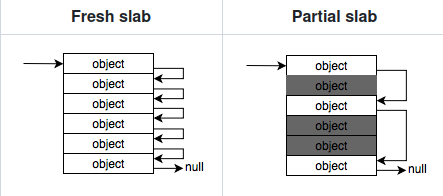

A cache’s slabs are divided into three lists: a list of full slabs (i.e. slabs with no free slots), a list of empty slabs (slabs on which all slots are free), and a list of partial slabs (slabs that have both used and free slots).

In SLUB, the pointer to the next free object (the freelist pointer) is stored directly inside the free object itself, so no additional space is needed for metadata and the slab reaches 100% utilization.

Each slab cache has its own kmem_cache structure, which keeps track of the cache's metadata.

There is one and only one kmem_cache for each object type, and all slabs of that object type are managed by the same kmem_cache. These structs are linked to each other in a doubly linked list, accessible through the slab_caches variable.
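
The in-object freelist can be modelled in a few lines of userspace C. This is a toy sketch under simplifying assumptions: the names toy_slab, toy_alloc and toy_free are hypothetical, the next pointer sits at offset 0 of each free object, and there is none of the freelist hardening a real kernel may enable.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

#define OBJ_SIZE  64
#define OBJ_COUNT 8

/* Toy slab: the only metadata outside the objects is the list head. */
struct toy_slab {
    unsigned char mem[OBJ_SIZE * OBJ_COUNT];
    void *freelist;  /* first free object, or NULL when the slab is full */
};

/* The next pointer is stored in the first bytes of a free object. */
static void *get_next(const void *obj)
{
    void *next;
    memcpy(&next, obj, sizeof(next));
    return next;
}

static void set_next(void *obj, void *next)
{
    memcpy(obj, &next, sizeof(next));
}

static void toy_slab_init(struct toy_slab *s)
{
    s->freelist = NULL;
    /* Thread every object onto the list; walking backwards leaves
     * object 0 at the head. */
    for (int i = OBJ_COUNT - 1; i >= 0; i--) {
        void *obj = &s->mem[i * OBJ_SIZE];
        set_next(obj, s->freelist);
        s->freelist = obj;
    }
}

static void *toy_alloc(struct toy_slab *s)
{
    void *obj = s->freelist;
    if (obj != NULL)
        s->freelist = get_next(obj);  /* pop the head */
    return obj;
}

static void toy_free(struct toy_slab *s, void *obj)
{
    assert(obj != NULL);
    set_next(obj, s->freelist);       /* push: freed object becomes head */
    s->freelist = obj;
}
```

Note the last-in, first-out behaviour: an object that was just freed is the first one handed out again, which is what makes reclaiming a freed object predictable in many UAF exploits.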

Understanding the kmem_cache structure

Each kmem_cache struct has two kinds of pointers to keep track of its slabs.

It contains an array of kmem_cache_node and a pointer to kmem_cache_cpu.

The kmem_cache_node array keeps track of partial and full slabs that aren’t active. They are accessed in case of a free, or when the active slab (pointed to by kmem_cache_cpu) fills up and another partial slab needs to take its place.

The kmem_cache_cpu pointer is responsible for managing the active slab. There is only one active slab per CPU, and the pointer refers to the one for the current CPU. The next allocation is served from this active slab by taking the head of its freelist.

Understanding the Buddy Allocator

The idea of the buddy allocator is to allocate and manage physical memory efficiently. It does so by dividing memory into blocks of 2^n * PAGE_SIZE bytes; whenever memory is requested, the system finds the smallest available block that can accommodate the requested size.

The main problem of the buddy allocator is internal fragmentation:

Since the buddy system allocates memory in blocks that are powers of two, there’s often a mismatch between the allocated block size and the actual memory requirement. For example, if an application requests 260KB of memory and the nearest power-of-two block size is 512KB, the system allocates the 512KB block, resulting in 252KB of unused space within the allocated block. This unused space represents internal fragmentation.
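
The arithmetic of this example can be checked with a short sketch. It assumes 4 KiB pages, and the helper names buddy_order and buddy_waste are made up for illustration.

```c
#include <assert.h>
#include <stddef.h>

#define TOY_PAGE_SIZE 4096UL  /* assumption: 4 KiB pages */

/* Smallest order n such that (TOY_PAGE_SIZE << n) >= size. */
static unsigned int buddy_order(size_t size)
{
    unsigned int order = 0;
    assert(size > 0);
    while ((TOY_PAGE_SIZE << order) < size)
        order++;
    return order;
}

/* Bytes lost to internal fragmentation for a request of `size` bytes. */
static size_t buddy_waste(size_t size)
{
    return (TOY_PAGE_SIZE << buddy_order(size)) - size;
}
```

For a 260KB request, buddy_order returns 7 (a block of 128 pages, i.e. 512KB) and buddy_waste returns 252KB, matching the numbers above.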

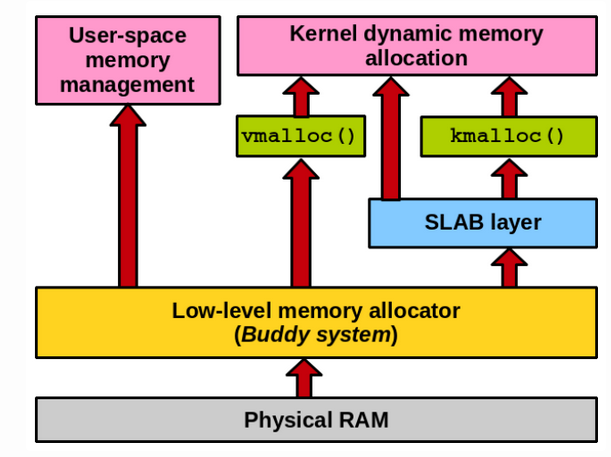

In Linux, this problem is addressed by slicing pages into smaller blocks of memory (slabs) for allocation.

The memory allocators in the Linux kernel are therefore layered: the slab allocator sits on top of the buddy allocator.

The pages that back each slab are allocated directly from the buddy allocator and are returned to it when the slab is released. This piece of information is important for the exploitation of this bug.

Exploitation

So we have a UAF bug in the dedicated filp slab cache.

There isn’t anything really interesting to overwrite there, and file structures don’t contain anything we would want to tamper with.

To overcome this issue, we can do a Cross-Cache Attack.

Cross-Cache Attack

In a cross-cache attack, we free the file objects we created so that the pages of their slab return to the buddy allocator. After that, we allocate objects of another type; the buddy allocator hands out the same pages again, so the new allocation overlaps the memory of the old file slab and our UAF now targets an object of another type.

To exploit this bug, I have used the Dirty Pagetable technique.

In this technique, the cross-cache attack targets page tables: pages full of PTEs (page table entries), which the kernel allocates straight from the buddy allocator.

When a page mapped with mmap is first accessed, a PTE is filled in that stores useful data such as the physical address of the page.

If we can make our UAF target this structure, we can change this address to gain a better primitive.

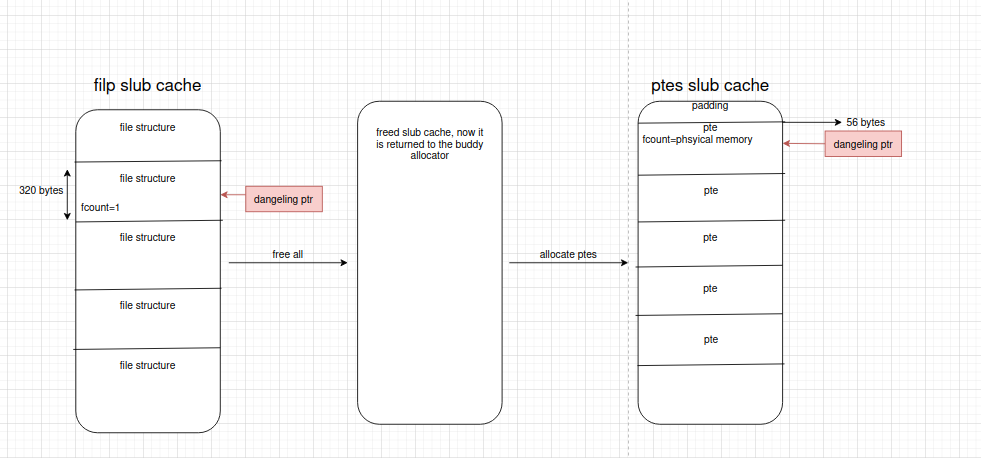

So now let's trigger our UAF on a PTE.

First, we call mmap many times to reserve virtual address ranges for later use. At this stage the kernel has not allocated our PTEs yet; it only does so when a page fault occurs, i.e. when the pages are first accessed from userspace.

Then we spray lots of file structures by repeatedly opening some file, and we make one of them our UAF'ed file. This fills up the dedicated slab cache.

Now, when we free all those structures, the slab allocator releases the emptied slabs, and their pages go back to the buddy allocator, unused.

Then, when we actually allocate the PTEs by touching the mapped pages, the kernel takes new page table pages from the buddy allocator, which reuses the pages previously occupied by the file slab.

Since we can still use one of the files there, we can corrupt data in the PTEs!
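
The lazy behaviour can be observed from userspace. The sketch below uses mincore, which reports whether a page is resident in memory; this is only a proxy for "the PTE has been populated", and the helper names reserve_pages and page_is_resident are hypothetical. On a typical Linux system an untouched anonymous page is expected to be reported as not resident, and as resident once it has been written to.

```c
#ifndef _GNU_SOURCE
#define _GNU_SOURCE
#endif
#include <assert.h>
#include <stddef.h>
#include <sys/mman.h>
#include <unistd.h>

/* Reserve `npages` pages of anonymous memory without touching them.
 * Returns NULL on failure. */
static void *reserve_pages(size_t npages)
{
    size_t page = (size_t)sysconf(_SC_PAGESIZE);
    assert(npages > 0);
    void *p = mmap(NULL, npages * page, PROT_READ | PROT_WRITE,
                   MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
    return p == MAP_FAILED ? NULL : p;
}

/* Returns 1 if the page at `addr` is resident, 0 if not, -1 on error.
 * `addr` must be page aligned. */
static int page_is_resident(void *addr)
{
    unsigned char vec = 0;
    size_t page = (size_t)sysconf(_SC_PAGESIZE);
    if (mincore(addr, page, &vec) != 0)
        return -1;
    return vec & 1;
}
```

A typical use is to call page_is_resident on a freshly reserved page, write one byte to it, and call it again. In the exploit, that first write is the moment at which the kernel has to allocate page tables for the region.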

Here is how we trigger this attack:

| |

Here is what the file structure looks like:

| |

One good field here is the f_count field, which represents the reference count of the file object.

When the dup syscall is called on an fd, the kernel assigns a new fd to the same file structure and increments its f_count.

With our UAF, we can make the position of the victim PTE coincide with f_count; we can then apply this increment primitive to the victim PTE and control it to some extent.
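
A minimal sketch of the increment primitive, assuming nothing beyond the dup syscall itself. The helper name bump_refcount is hypothetical; it keeps the duplicated fds so the caller can close them later.

```c
#include <assert.h>
#include <stddef.h>
#include <unistd.h>

/* Duplicate `fd` up to `count` times. Each successful dup() adds one
 * reference to the underlying file structure. The new fds are stored in
 * `out`, which must have room for `count` entries.
 * Returns the number of duplicates actually created. */
static size_t bump_refcount(int fd, int *out, size_t count)
{
    size_t made = 0;
    assert(out != NULL);
    while (made < count) {
        int dupfd = dup(fd);
        if (dupfd < 0)
            break;  /* e.g. EMFILE: per-process fd limit reached */
        out[made++] = dupfd;
    }
    return made;
}
```

The number of increments a single process can perform this way is bounded by its open-file limit, which is something an exploit has to account for.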

The aligned size of a file object is 320 bytes, the offset of f_count is 56, and the size of f_count is 8 bytes.

The size of the slab of filp kmem cache is two pages, and there are 25 file objects in a slab of filp kmem cache.
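
With these numbers we can work out which PTE slot a given file's f_count would land on if its slab page were reused as a page table. The sketch below assumes 4 KiB page table pages with 8-byte entries (x86-64) and that objects are packed from the start of the slab; the helper names are made up for illustration.

```c
#include <assert.h>
#include <stddef.h>

#define FILE_OBJ_SIZE   320   /* aligned size of a file object */
#define F_COUNT_OFFSET  56    /* offset of f_count inside it */
#define OBJS_PER_SLAB   25    /* file objects per two-page slab */
#define PTE_SIZE        8     /* assumption: x86-64 page table entry */
#define PT_PAGE_SIZE    4096  /* assumption: 4 KiB page table page */

/* Byte offset of the f_count of object `idx`, from the slab start. */
static size_t f_count_offset(size_t idx)
{
    assert(idx < OBJS_PER_SLAB);
    return idx * FILE_OBJ_SIZE + F_COUNT_OFFSET;
}

/* Index of the PTE that this f_count overlaps within its page. Both the
 * object size and the field offset are multiples of 8, so f_count covers
 * exactly one entry. */
static size_t overlapped_pte_index(size_t idx)
{
    return (f_count_offset(idx) % PT_PAGE_SIZE) / PTE_SIZE;
}
```

Under these assumptions, the first object in the slab overlaps PTE index 7 (byte offset 56), and the second overlaps index 47 (byte offset 376).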

We would want to shape the heap to look something like this:

So now, we have an increment primitive over the physical address of a PTE.

This is not enough to win: we can’t simply overwrite kernel text/data, because the physical address of the memory region allocated by mmap() is likely greater than the physical address of kernel text/data, and we can only increment.

Still, we can write to pretty much any place in physical memory that comes after the PTE's target.

The dma-buf subsystem provides the framework for sharing buffers for hardware (DMA) access across multiple device drivers and subsystems, and for synchronizing asynchronous hardware access.

With the dma-buf system heap, we can have physical pages mapped into kernel space and user space at the same time.

We can open the DMA heap device with open("/dev/dma_heap/system"). By issuing the DMA_HEAP_IOCTL_ALLOC ioctl on this device, we can allocate memory that can be mapped into userspace.

A page allocated with this ioctl is likely to be physically close to the page that holds our victim PTE.

Since we already know which userspace page has the PTE that overlaps f_count, we can munmap it and map the dma-buf page in its place, so that f_count overlaps the PTE of the dma-buf page.

Using the increment primitive, we then shift the physical address in that PTE so that the dma-buf mapping points at a page table page instead. From then on we can edit PTEs directly from userspace, so we can basically point them at any physical address and gain an arbitrary read/write primitive on physical memory.

With that primitive, we can simply overwrite the machine code of the setresuid syscall so that it no longer checks the caller's permissions.

Here is roughly what the exploit looks like:
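
Once PTEs are writable from userspace, forging one is plain bit arithmetic. The sketch below assumes x86-64 with 4 KiB pages; the macro and function names are made up for illustration, and the flag choice (present, writable, user, accessed, dirty, no-execute) is one reasonable option rather than the only one.

```c
#include <assert.h>
#include <stdint.h>

/* x86-64 page table entry bits for 4 KiB pages. */
#define PTE_PRESENT   (1ULL << 0)
#define PTE_WRITABLE  (1ULL << 1)
#define PTE_USER      (1ULL << 2)
#define PTE_ACCESSED  (1ULL << 5)
#define PTE_DIRTY     (1ULL << 6)
#define PTE_NX        (1ULL << 63)
#define PTE_ADDR_MASK 0x000ffffffffff000ULL  /* bits 12..51 */

/* Build a PTE that maps `phys_addr` read/write for userspace. */
static uint64_t make_user_rw_pte(uint64_t phys_addr)
{
    /* The address must be page aligned and fit in bits 12..51. */
    assert((phys_addr & ~PTE_ADDR_MASK) == 0);
    return phys_addr | PTE_PRESENT | PTE_WRITABLE | PTE_USER |
           PTE_ACCESSED | PTE_DIRTY | PTE_NX;
}

/* Extract the physical address a PTE points at. */
static uint64_t pte_phys_addr(uint64_t pte)
{
    return pte & PTE_ADDR_MASK;
}
```

Because the physical address starts at bit 12, adding 0x1000 to a PTE moves its target forward by exactly one page. This is why incrementing f_count 0x1000 times shifts the overlapped mapping to the next physical page.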

| |

I took a lot of inspiration from ptr-yudai's blog exploit, so thanks a lot for that!